The previous blog on conducting a data quality assessment introduced common root causes for issues with the schedule and cost data. Once you have identified the root cause for the data quality issues, the next step is to determine your best options to resolve the source of the problem.

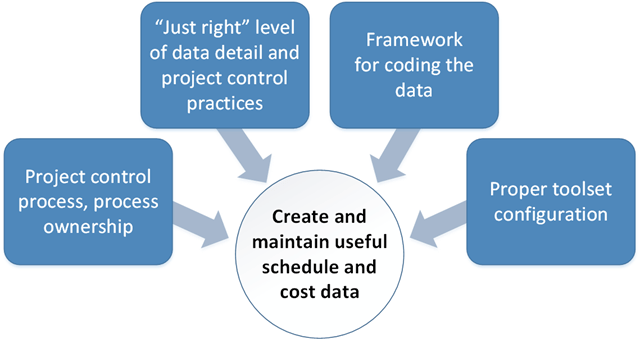

Project personnel play an essential role in creating and maintaining quality schedule and cost data for the life of the project. You can resolve and prevent data quality issues by consistently making sure people have useful guidance and tools to follow preferred practices. It starts with personnel following a disciplined project control process so they routinely create schedule and cost data at the “just right” level of detail, with the necessary coding, using properly configured toolsets. The image above illustrates these factors. If one or more of these factors misses the mark, it becomes harder for anyone to create and maintain useful data.

Let’s take a look at each of these factors and what you can do to make improvements should you find data quality issues.

Project control process and process ownership. Do project personnel have the guidance they need to create useful data? Frequently they don’t know what they should be doing, or they can’t find the guidance they need. Two previous blogs, “Who is your project control process written for?” and “Designing the project control process for project personnel,” discuss options for refocusing your project control process and procedures. Verify that project personnel have what they need to do their jobs effectively. The guidance is typically a combination of a project control process description, workflow procedures, and desktop instructions.

The workflow procedures are important because they describe the process steps, who is doing what, typical inputs or entrance criteria, and expected outputs or exit criteria. Workflow procedures help to establish process ownership – who is responsible for the data content or executing specific actions. Desktop instructions are important because they focus on helping someone accomplish a specific project control task using a specific toolset.

Do project personnel know how to use the toolsets effectively? Desktop instructions can help, as can training. The blog “A better approach for project control training” discusses creating task-focused training that combines process and toolset instruction. It is targeted training designed for specific roles or job functions. The benefit of this approach is that it reinforces the process and workflow procedures with hands-on training that uses the toolsets to accomplish specific project control tasks. It helps ensure project personnel know how to use the toolsets while following established project control practices.

“Just right” level of data detail and project control practices. Is the data at so high a level that project personnel and management lack sufficient visibility into what is going on with a project? Is the data at so low a level that project personnel avoid certain project control practices because they have become too complicated or time consuming? Are the project control practices implemented on the project appropriate for the scope of work?

This is where scaling your project control process comes into play – the art of determining the appropriate level of data detail, project control discipline, and range of project control practices to match each project’s characteristics. The blog on “Scaling project control practices” discusses creating a framework project managers can use to determine that “just right” level of data detail and control. Project characteristics or attributes guide the decision-making process. Typical attributes include the type of work, work scope requirements, contract value, complexity, project duration, resource requirements, risk factors, and contractual requirements.
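To make the idea of a scaling framework a little more concrete, here is a minimal Python sketch that scores a few project attributes and maps the score to a control tier. Everything in it is a hypothetical placeholder: the attribute set, the dollar and duration thresholds, the weights, and the tier names (`basic`, `standard`, `full`). Your own framework would define these from your organization’s policies and contract requirements.

```python
from dataclasses import dataclass

# Hypothetical practices introduced at each tier; higher tiers also keep
# the practices of the lower tiers.
TIER_PRACTICES = {
    "basic": ["milestone schedule", "budget vs. actuals"],
    "standard": ["resource-loaded network schedule", "monthly variance analysis"],
    "full": ["earned value management", "schedule risk assessment"],
}


@dataclass
class ProjectAttributes:
    contract_value: float           # total contract value in dollars
    duration_months: int            # planned project duration
    complexity: str                 # "low", "medium", or "high"
    evm_required_by_contract: bool  # contractual requirement flag


def control_tier(p: ProjectAttributes) -> str:
    """Score the attributes and return a hypothetical control tier."""
    # A contractual requirement overrides the score.
    if p.evm_required_by_contract:
        return "full"
    score = 0
    if p.contract_value >= 20_000_000:
        score += 2
    elif p.contract_value >= 1_000_000:
        score += 1
    if p.duration_months >= 24:
        score += 1
    score += {"low": 0, "medium": 1, "high": 2}[p.complexity]
    if score >= 4:
        return "full"
    if score >= 2:
        return "standard"
    return "basic"


if __name__ == "__main__":
    project = ProjectAttributes(5_000_000, 18, "medium", False)
    tier = control_tier(project)
    print(tier, TIER_PRACTICES[tier])
```

An explicit score keeps the decision repeatable and easy to explain to project managers; a lookup table of attribute combinations would serve the same purpose.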

Framework for coding the data. Are you finding that the schedule or cost data are missing coding needed to integrate the data, produce reports, or sort and filter the data? It could be that project personnel didn’t know they needed to include specific coding as they were creating the schedule and budget data.

Preventing these data quality issues requires up-front planning for a new project. Start by mapping out what is required to integrate the different sources of data as well as to sort, select, and filter the data for data views, reports, or data submissions. The goal is to define an integrated data framework or project control data architecture. How do the data pieces fit together? Is there a single source for data with defined interface points? Can you trace the data from the top down or bottom up using the different attributes of the coding framework and get the same answer?
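One way to test the “same answer” question is a simple trace that rolls work package budgets up to their control accounts (bottom up) and compares the result with the control account budgets recorded separately (top down). The Python sketch below assumes hypothetical record fields (`work_package`, `control_account`, `budget`) and puts any record without a control account code into an `UNCODED` bucket, which is exactly the kind of gap a data quality assessment tends to surface.

```python
from collections import defaultdict


def roll_up(records, key):
    """Sum budget by a coding attribute; missing codes land in an UNCODED bucket."""
    totals = defaultdict(float)
    for r in records:
        totals[r.get(key) or "UNCODED"] += r["budget"]
    return dict(totals)


def trace_mismatches(work_packages, control_account_budgets, tolerance=0.01):
    """Compare the bottom-up roll-up with top-down control account budgets.

    Returns {code: (top_down, bottom_up)} for every code that does not match.
    """
    bottom_up = roll_up(work_packages, "control_account")
    mismatches = {}
    for code in sorted(set(bottom_up) | set(control_account_budgets)):
        top = control_account_budgets.get(code, 0.0)
        bottom = bottom_up.get(code, 0.0)
        if abs(top - bottom) > tolerance:
            mismatches[code] = (top, bottom)
    return mismatches


# Hypothetical sample data: WP-202 is missing its control account code.
work_packages = [
    {"work_package": "WP-101", "control_account": "CA-01", "budget": 120_000},
    {"work_package": "WP-102", "control_account": "CA-01", "budget": 80_000},
    {"work_package": "WP-201", "control_account": "CA-02", "budget": 150_000},
    {"work_package": "WP-202", "control_account": None, "budget": 50_000},
]
control_account_budgets = {"CA-01": 200_000, "CA-02": 150_000, "CA-03": 50_000}

print(trace_mismatches(work_packages, control_account_budgets))
# {'CA-03': (50000, 0.0), 'UNCODED': (0.0, 50000.0)}
```

Because `roll_up` works on any coding attribute, the same trace can be repeated by WBS or OBS element once those codes are part of the records.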

One approach is to produce a project management plan or similar project directive that clearly states expectations as project personnel develop the network schedule and time-phased budget data. You could organize the guidelines for establishing the project data by process areas. For example:

- Organizing the work. This includes the WBS, WBS dictionary, project OBS, control accounts, work packages, and planning packages. The various coding structure elements, control accounts, or work packages may also have assigned coding necessary to integrate or interface with other business systems such as accounting. For example, a charge code could be an attribute of a work package or control account. What is the level of detail for control accounts and work packages?

- Scheduling the work. Is an integrated master plan (IMP) a contractual requirement? What are the major project events? What is the level of schedule detail, such as a one-to-one or many-to-one relationship between activities and work packages? What level of detail is required for resource loading the activities? For example, do the resource assignments reflect a named person, skill code, or summary resource category? What are the activity coding requirements necessary to sort, select, or filter the data? What’s needed to generate the time-phased budget data? This typically includes the WBS, OBS, control account, and work package coding. What about other coding necessary to integrate or interface with other systems, such as a production scheduling system? Are there any activity duration and float limitations or guidelines? How are level of effort (LOE) activities handled? Is a schedule risk assessment required? What about hand-offs between work teams? Consider producing a schedule data dictionary for more complex schedules to document the coding requirements.

- Budgeting the work. How are you managing the contract budget base, performance measurement baseline, and amount set aside for management reserve? The time-phased budget plan data produced from the schedule data should reconcile with the total budget values for the project. Consider including a cross reference map to document how the schedule activity and resource assignment coding determines the control account, work package, and element of cost codes for the budget data. How is the amount set aside for management reserve determined?

- Risk and opportunity management. Are there specific requirements for identifying, qualifying, quantifying, and handling the project’s risks and opportunities?

- Status and analysis cycle. Is this a weekly or monthly process or a combination? What’s included? What are the expected performance analysis or reporting deliverables? These can be internal or external. Required outputs can help identify the data coding requirements. What are the thresholds or criteria for determining what is considered a significant time or cost variance?

- Integrating subcontractor schedule and cost data. How do you intend to do this? What level of detail is required? What’s the process?
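To illustrate the cross reference map and budget reconciliation described under “Budgeting the work,” here is a Python sketch. It assumes a hypothetical export of resource assignments that already carries control account and work package codes, plus a hypothetical map from resource codes to element of cost (EOC). It sums the time-phased budget by control account, work package, EOC, and period, then compares the total with the performance measurement baseline, computed here as the contract budget base minus management reserve (assuming no over-target baseline).

```python
from collections import defaultdict

# Hypothetical cross reference map: resource code -> element of cost (EOC).
RESOURCE_TO_EOC = {
    "ENG-SR": "LABOR",
    "MAT-STEEL": "MATERIAL",
    "SUB-ACME": "SUBCONTRACT",
}

# Hypothetical resource assignments exported from the schedule.
assignments = [
    {"activity": "A100", "ca": "CA-01", "wp": "WP-101", "resource": "ENG-SR", "period": "2024-01", "cost": 40_000},
    {"activity": "A100", "ca": "CA-01", "wp": "WP-101", "resource": "ENG-SR", "period": "2024-02", "cost": 40_000},
    {"activity": "A110", "ca": "CA-01", "wp": "WP-102", "resource": "MAT-STEEL", "period": "2024-02", "cost": 25_000},
    {"activity": "A200", "ca": "CA-02", "wp": "WP-201", "resource": "SUB-ACME", "period": "2024-03", "cost": 60_000},
    {"activity": "A210", "ca": "CA-02", "wp": "WP-202", "resource": "ENG-XX", "period": "2024-03", "cost": 10_000},
]


def time_phased_budget(assignments, resource_to_eoc):
    """Sum cost by (control account, work package, EOC, period).

    Activities whose resource code has no EOC mapping are returned separately.
    """
    budget = defaultdict(float)
    unmapped = []
    for a in assignments:
        eoc = resource_to_eoc.get(a["resource"])
        if eoc is None:
            unmapped.append(a["activity"])
            continue
        budget[(a["ca"], a["wp"], eoc, a["period"])] += a["cost"]
    return dict(budget), unmapped


def reconcile(budget, contract_budget_base, management_reserve, tolerance=0.01):
    """Compare the time-phased total with PMB = CBB - MR (no over-target baseline)."""
    pmb = contract_budget_base - management_reserve
    difference = sum(budget.values()) - pmb
    return difference, abs(difference) <= tolerance


budget, unmapped = time_phased_budget(assignments, RESOURCE_TO_EOC)
difference, reconciled = reconcile(budget, contract_budget_base=210_000, management_reserve=35_000)
print(unmapped, difference, reconciled)
# ['A210'] -10000.0 False
```

In this sample the $10,000 gap traces directly to activity A210, whose resource code has no EOC mapping. That is the sort of disconnect a documented cross reference map makes easy to find.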
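The status and analysis question about variance thresholds can also be made concrete. The Python sketch below uses the standard earned value formulas, schedule variance SV = BCWP - BCWS and cost variance CV = BCWP - ACWP, with percentages taken against BCWS and BCWP respectively. It flags a variance only when it breaches both a percentage and a dollar threshold. The threshold values and the both-must-breach rule are hypothetical; each project sets its own criteria, and some use either/or rules instead.

```python
from dataclasses import dataclass

# Hypothetical thresholds; each project defines its own.
PCT_THRESHOLD = 0.10       # 10 percent
DOLLAR_THRESHOLD = 25_000  # dollars


@dataclass
class ControlAccountStatus:
    name: str
    bcws: float  # budgeted cost of work scheduled (planned value)
    bcwp: float  # budgeted cost of work performed (earned value)
    acwp: float  # actual cost of work performed (actual cost)


def significant_variances(s: ControlAccountStatus):
    """Return (variance type, amount, percent) tuples that breach both thresholds."""
    sv = s.bcwp - s.bcws
    cv = s.bcwp - s.acwp
    sv_pct = sv / s.bcws if s.bcws else 0.0
    cv_pct = cv / s.bcwp if s.bcwp else 0.0
    flags = []
    for label, amount, pct in (("SV", sv, sv_pct), ("CV", cv, cv_pct)):
        if abs(amount) >= DOLLAR_THRESHOLD and abs(pct) >= PCT_THRESHOLD:
            flags.append((label, amount, pct))
    return flags


statuses = [
    ControlAccountStatus("CA-01", bcws=200_000, bcwp=170_000, acwp=190_000),
    ControlAccountStatus("CA-02", bcws=100_000, bcwp=100_000, acwp=101_000),
]
for s in statuses:
    for label, amount, pct in significant_variances(s):
        print(f"{s.name}: {label} {amount:,.0f} ({pct:.0%})")
# CA-01: SV -30,000 (-15%)
```

Requiring both thresholds keeps small control accounts from generating analysis work over minor dollar amounts, while still catching large absolute swings on big accounts.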

Keep this guidance as simple and straightforward as possible. Make it easy for project personnel to do the right things as they develop the schedule and cost data for the project.

Proper toolset configuration. Is the toolset configuration hindering the data integration process? This is directly related to the data quality issues that surface when data is missing the necessary coding. Often when you identify missing coding or data disconnects, you quickly find issues with the toolset configuration settings, coding attributes, or how people populate the various user-defined fields. For example, perhaps the scheduler didn’t realize they needed to use a defined WBS outline code instead of the default WBS field in Microsoft Project. Modeling the way you do business to create a useful data framework takes effort and up-front planning. With an integrated data framework in place, it is much easier to configure the toolsets, establish the utilities to share data between toolsets, and perform data traces.
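A schedule data dictionary, as suggested under “Scheduling the work,” also gives you something to check the toolset output against. Here is a minimal Python sketch that validates exported activity rows against a dictionary of required coding fields and value patterns. The field names and patterns are hypothetical; in practice you would map them to whatever custom fields or outline codes your scheduling tool is configured to use. An empty `wbs_code` is the kind of result you might see when a scheduler populated the default WBS field instead of the defined WBS outline code.

```python
import re

# Hypothetical schedule data dictionary: required field -> pattern for valid values.
DATA_DICTIONARY = {
    "wbs_code": re.compile(r"\d+(\.\d+)*"),  # e.g. 1.2.3
    "control_account": re.compile(r"CA-\d{2}"),
    "work_package": re.compile(r"WP-\d{3}"),
    "obs_code": re.compile(r"[A-Z]{3}"),
}


def validate_activities(rows, dictionary=DATA_DICTIONARY):
    """Return (activity_id, field, problem) for each missing or invalid code."""
    problems = []
    for row in rows:
        for field, pattern in dictionary.items():
            value = (row.get(field) or "").strip()
            if not value:
                problems.append((row["activity_id"], field, "missing"))
            elif not pattern.fullmatch(value):
                problems.append((row["activity_id"], field, f"invalid: {value!r}"))
    return problems


# Hypothetical exported rows (for example, read with csv.DictReader).
activities = [
    {"activity_id": "A100", "wbs_code": "1.1", "control_account": "CA-01", "work_package": "WP-101", "obs_code": "ENG"},
    {"activity_id": "A200", "wbs_code": "", "control_account": "CA-02", "work_package": "WP-201", "obs_code": "MFG"},
    {"activity_id": "A300", "wbs_code": "1.3", "control_account": "CA3", "work_package": "WP-301", "obs_code": "ENG"},
]

for problem in validate_activities(activities):
    print(problem)
# ('A200', 'wbs_code', 'missing')
# ('A300', 'control_account', "invalid: 'CA3'")
```

Running a check like this each status cycle catches coding gaps before they turn into budget disconnects or broken reports.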

Do you need help sorting through your data quality issues or figuring out the best way to prevent and resolve them? PrimePM has the cross-functional project control and toolset experts that can make a difference for your project personnel. We can help you identify the source of data quality issues quickly, create a useful integrated data framework for your environment, and configure your schedule and cost toolsets appropriately.